The MCP AuthN/Z Nightmare

05 Mar 2026 - Posted by Francesco Lacerenza

This article shares our perspective on the current state of authentication and authorization in enterprise-ready, remote MCP server deployments.

Before diving into that discussion, we’ll first outline the most common attack vectors. Understanding these threats is essential to properly frame the security challenges that follow. If you’re already familiar with them, feel free to skip to the section “Enterprise Authentication and Authorization: a Work in Progress” below.

Huge shoutout to Teleport for sponsoring this research. Thanks to their support, we have been able to conduct cutting-edge security research on this topic. Stay tuned for upcoming MCP security updates!

At this stage, introducing the Model Context Protocol (MCP) would be redundant since it has already been thoroughly covered in the recent surge of security blog posts.

For anyone who may have missed the conversation, here’s a brief recap:

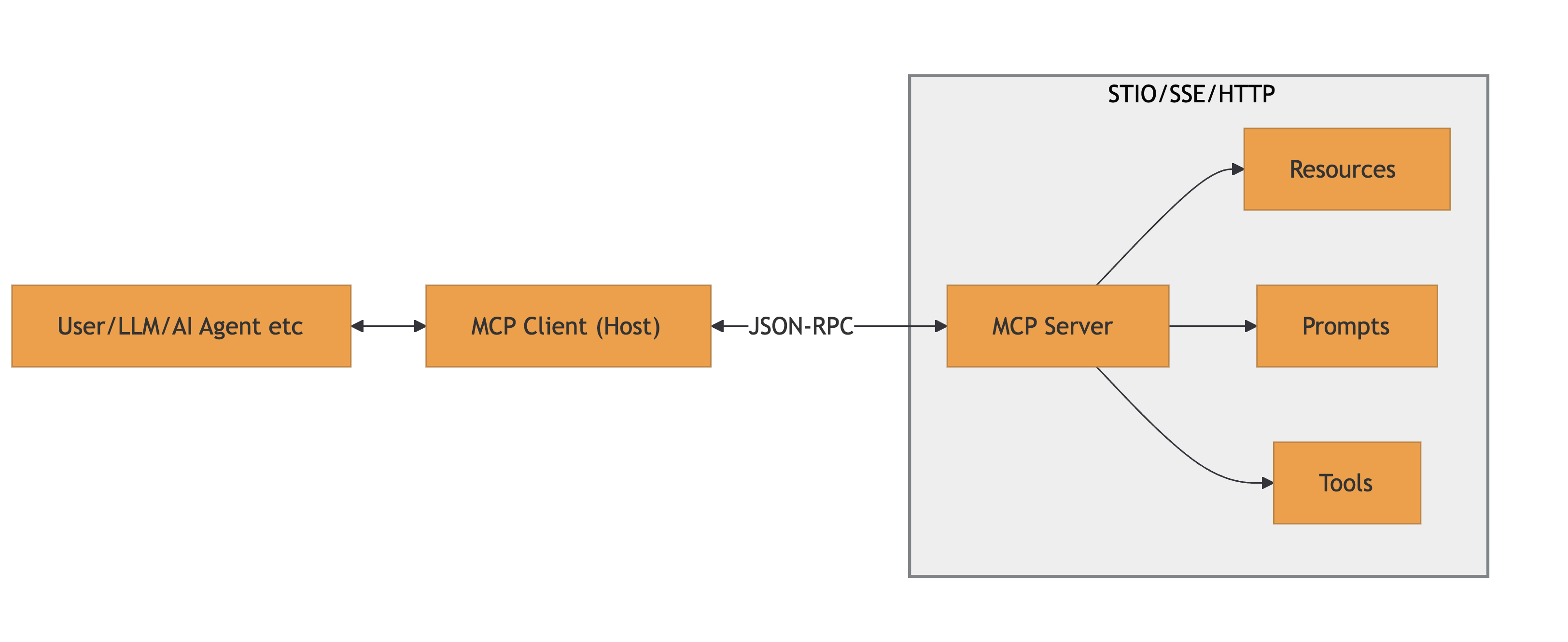

MCP is a protocol used to connect AI models to: data, tools and prompts. It uses JSON-RPC messages for communication. It’s a stateful connection where clients and servers negotiate capabilities.

A high-level architecture is provided below:

References: MCP Specification and MCP Architecture

MCP Attack Vectors

Several categories of vulnerabilities pertaining to MCP emerged in the wild. While it might not fit every bug you read about, as things are changing on a daily basis, a good starting point is the good and not-so-old OWASP MCP Top 10.

Below are the most relevant vulnerabilities we have encountered so far, organized by the malicious actor profile:

Malicious MCP Server

Rogue MCP servers could intentionally exploit clients with:

- Tool Poisoning: The server provides malicious tool definitions or modifies them after user approval. Sub-categories and variations of the attack:

- Rug Pulls: A server presents benign capabilities during initial

tools/listcall, then switches to malicious ones during execution or in subsequent MCP messages - Tool Shadowing: A malicious server injects tool descriptions that modify the agent’s behavior with respect to a trusted tool

- Schema Poisoning: Corrupting interface definitions to mislead the model. The schema is used by MCP clients to validate the tool inputs and outputs and to let the model know what is required to interrogate them

- Rug Pulls: A server presents benign capabilities during initial

- Prompt Injection via Tool Responses: The server returns malicious instructions embedded in MCP responses to normal actions, which the client’s LLM then executes

- Data Exfiltration via Resources: Malicious servers exposing resources that leak sensitive client information etc.

It should be highlighted that the the listed attacks are exploitable by either local or remote MCP servers. Of course, the outcome varies drastrically in terms of achievable impacts.

Malicious MCP Client

Rogue MCP clients could intentionally exploit servers with:

- Command Injection: Crafted MCP Message inputs sent to vulnerable MCP servers that do not properly sanitize - allowing arbitrary command execution (mostly in a old-fashioned way)

- Examples:

CVE-2025-53100(RestDB’s Codehooks.io MCP Server),CVE-2025-53818(GitHub Kanban MCP Server)

- Examples:

- Context Injection & Over-Sharing: Servers that do not properly isolate context, allowing exfiltration of sensitive information from other users/sessions

- Prompt Injection: The MCP Server could receive malicious prompts from the client, which would then modify its behavior to execute the requested tasks

Other Malicious Actors

Beyond the traditional client-server factors, an MCP ecosystem could also be compromised by:

- MCP Proxies/Gateways: Intermediary systems (like MCP proxies) used for routing and authorization of MCP. These could alter passing MCP messages or simply be vulnerable to policy bypasses. You might be surprised by the number of MCP Gateways out there

- Single-Sign-On (SSO) Intermediaries: MCP servers using OAuth 2.0/2.1 for authorization rely on discovery endpoints (

.well-known/oauth-authorization-server) and dynamic client registration. Malicious actors could exploit these intermediaries by injecting fake metadata, manipulating redirect URIs, or compromising the registration endpoint to obtain unauthorized client credentials (e.g.,CVE-2025-4144- a PKCE bypass inworkers-oauth-provider,CVE-2025-4143- improperredirect_urivalidation)

The Nightmare: New Actors, New Problems to Solve

Securing SSO remains an open challenge for the industry due to its intrinsic complexity. The past few years have highlighted this reality, with a steady stream of severe vulnerabilities affecting OAuth2, OIDC, SAML and SCIM implementations.

Yet, progress never stops and authentication & authorization in MCP are the new inevitable nightmare. Being a relatively new protocol, the standards for how clients and servers should establish trust are still evolving, leading to a fragmented ecosystem.

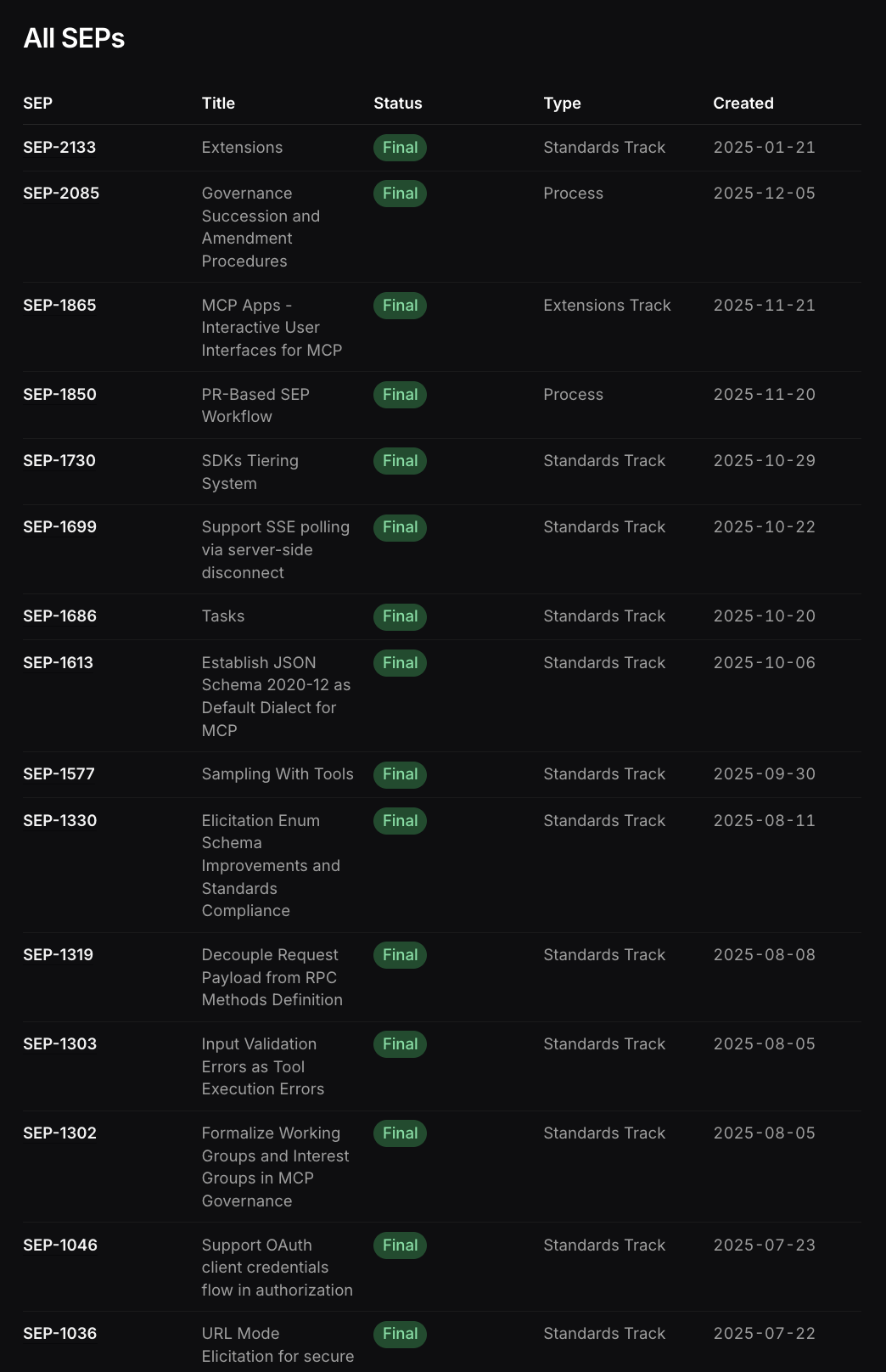

The specifications for AuthN/AuthZ are subject to continuous changes and extensions, as is common for newborn protocols. This instability means that today’s “secure and compliant” implementation might be deprecated or insufficiently secure tomorrow.

Just few of the latest Specification Enhancement Proposals (SEPs) in MCP

Multiple significant issues have been emerging in the MCP SSO implementation, many as descendants of the common OAuth2/OIDC vulnerabilities, but also new ones.

We have seen browser-based clients or open() URL handlers exploited to launch arbitrary processes or redirect to malicious servers, showing the fragility of the MCP client-side implementation, often linked to automatic action executors.

Then, attacks against the new metadata discovery and old-school metadata endpoints:

- Protected Resource Metadata (PRM) documents injected with malicious URI schemes

- OIDC Discovery endpoints manipulated to redirect flows

Notable mentions around the cited scenarios are: CVE-2025-6514, “From MCP to Shell”, CVE-2025-4144, CVE-2025-4143, CVE-2025-58062

Furthermore, many implementations (like IDE extensions and CVE-2025-49596) assumed localhost was secure, starting WebSocket servers without auth, allowing any local process (or malicious website via DNS rebinding) to connect.

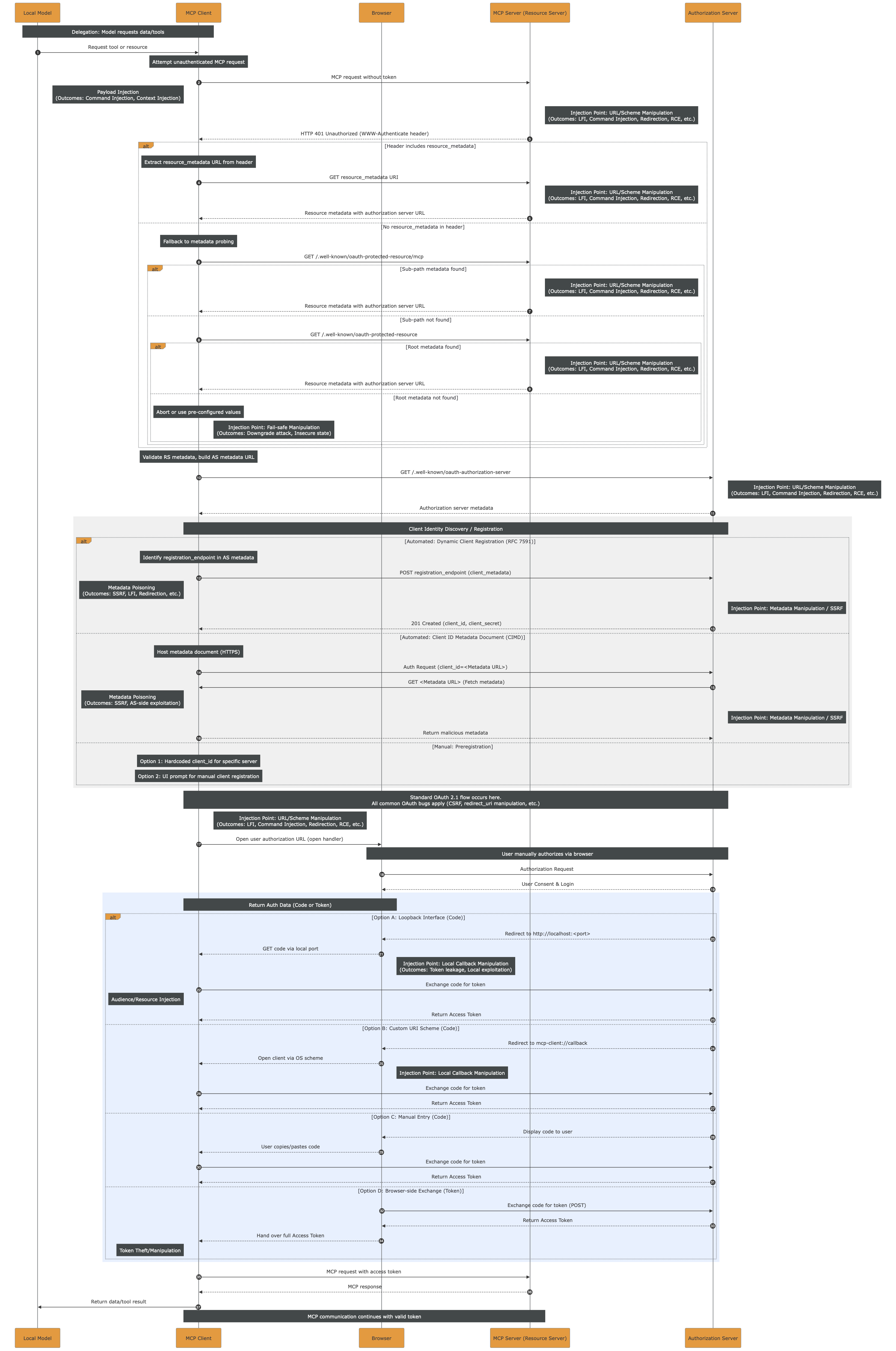

While keeping up with the latest news is pretty complex, time consuming and not always possible, we attempted to sum-up the potential injection points affecting the current MCP Authentication via OAuth2 and dynamic client registration.

A Scary Sequence Diagram

The monolith sequence diagram below embodies the title of this post. It should serve as a reminder of how extensive the attack surface is and how many injection points exist. One could argue that “every step is an injection point” and that would not be inaccurate. However, the goal here is to illustrate the full length of the authorization flow, from start to finish, highlighting the many branches, variations, and opportunities for subtle yet impactful vulnerabilities.

The high-resolution PDF file can be downloaded here.

While prompt injection requires a different approach, most of the injection points and impactful outcomes, such as LFI, RCE, etc. could be prevented by strictly applying sanitization and validation of the inputs. Still, the monolith highlights how complex it is to do so, given the length and variety of actors throughout the entire flow.

Enterprise Authentication and Authorization: a Work in Progress

In the OAuth specification, there is scope consent by the user at the time of authorization.

The user HAS to see and approve the exact scopes for each third-party tool/app/etc. before any token is issued by the IdP.

Currently, there is no homogeneous way to manage MCP security across an enterprise. While individual MCP tools struggle with authentication and often just rely on secret tokens, the Enterprise-level authN/Z is a whole other challenge.

In fact, in enterprise-managed authorization the scope consent is decoupled from the time of authorization.

As an example, an MCP client with enterprise authorization could be accessing Slack and GitHub on behalf of the user, but the user never explicitly consented to

github:readslack:writein a consent screen. The local agent decided the task and the scopes required, and the enterprise policy enforcement decided to allow it on behalf of the user based off their MCP Client identity.

Down that path, there is intermediary tooling trying to offer a partial solution such as MCP Proxies/Gateways. While they are extremely useful at aggregating MCP severs under the same centrally-managed authentication and authorization layer, they are still not solving the problem rising with dynamic scopes and plug-and-play third-party tools/apps.

On the other side, there are active discussions around a native Enterprise-Managed Authorization Extension for the Model Context Protocol. During our research on the matter, we had the possibility to do a deep dive into a current draft of the extension, which relies on the Identity Assertion JWT Authorization Grant (JAG). Given our exposure to real-life security engineering challenges faced by our clients, we decided to take a step further and offer our feedback on the draft. We strongly suggest reading the Extension Draft and Doyensec’s pull-request with the updated Security Considerations.

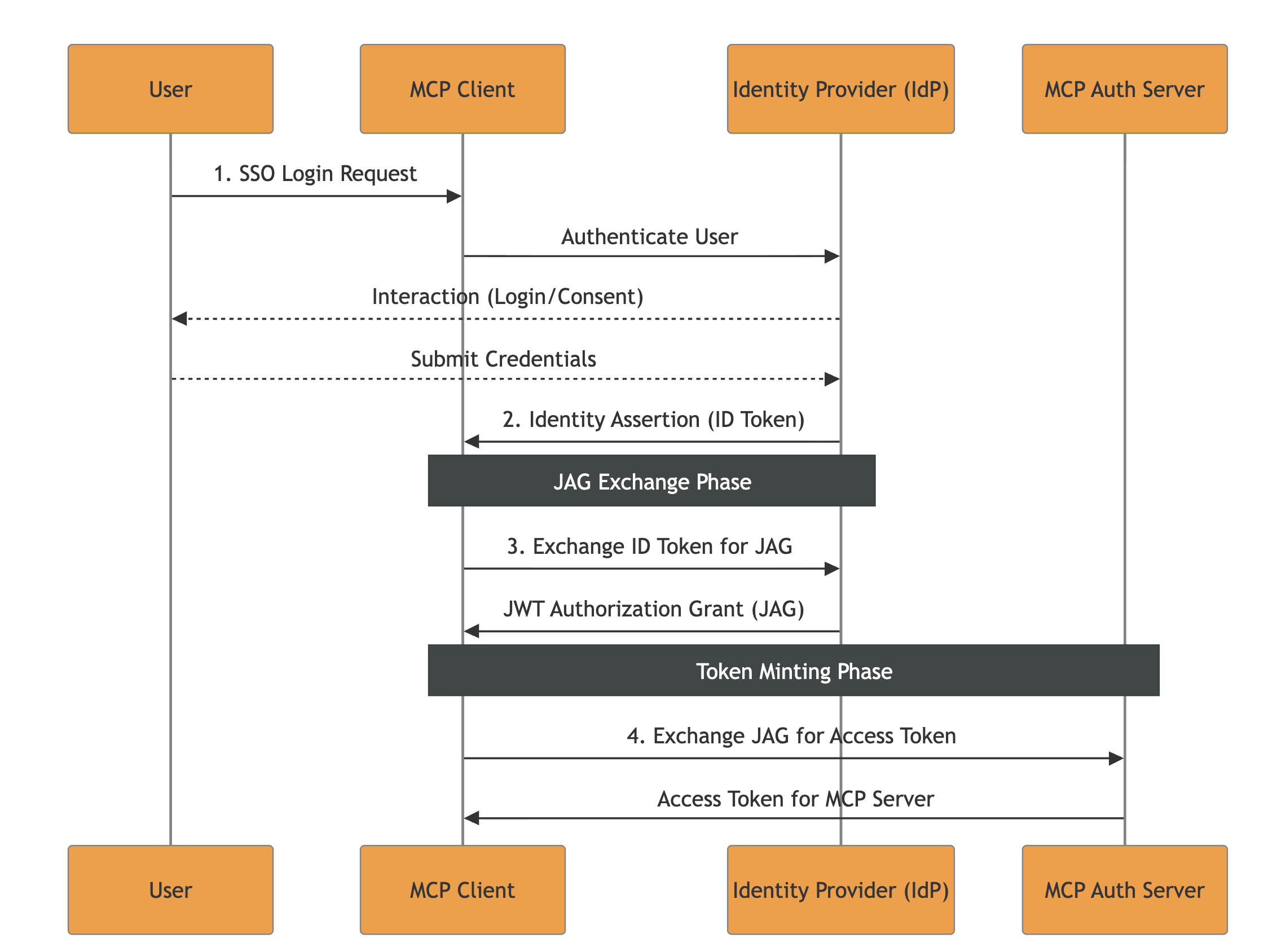

The JAG Problem (Identity Assertion JWT Authorization Grant)

The following summarizes the JAG approach and our considerations. For readers interested in understanding all the aspects in great depth, we would recommend reading the full draft before continuing.

The main idea of this specification revolves around leveraging existing Enterprise Identity Providers (IdPs), such as Okta or Azure AD.

The flow’s key-points are:

The current specification introduces a few outstanding challenges:

1. Access Invalidation Problem

There are three level of tokens issued throughout a correct execution of the flow:

- ID Token from IdP

- ID Token For the Grant (JAG ID) from IdP

- MCP Access Token from the MCP Authorization Server

The proposed specification does not explicitly describe mechanisms for invalidating access to an MCP client or revoking issued tokens / ID-JAG.

MCP-Specific Note: While the access invalidation is also unspecified in the parent RFCs, the high risk associated with non-deterministic agentic accesses to tools and resources should require an access invalidation flow for the Enterprise context. Otherwise, the enterprise processes being authorized with the above mentioned spec would not have a clear emergency recovery pattern whenever agents start misbehaving (e.g., injections and other widely known attacks). Consequently, every actor could end-up proposing its own recovery pattern, bringing ambiguity and implementation differences.

2. LLM Scope Abuse Without User Consent

In JAG, the IdP issues an ID Token with no scopes embedded. It just states the identity of the user to allow impersonation from the MCP client. When the MCP client requests a JAG for high-risk scopes like github:write slack:write, no consent pop-up is triggered. The enterprise policy decides on behalf of the user being impersonated.

MCP-Specific Note: While this is totally normal in a classic Machine-to-Machine (M2M) environemnt where the enterprise users are expected to be directly mandating specific tasks on their behalf to automation software, that standard does not apply to the MCP field.

The tasks and actions list being transformed into MCP interactions are not directly chosen deterministically from the end-user.

In general, the consent requirement bypass offered by JAG would allow LLMs to autonomously request any scope permitted by enterprise policies, even if it’s irrelevant to the user’s current task, removing the human-in-the-loop for high-risk actions.

3. How the IdP Creates, Distributes and Validates Clients

Within the JAG proposal, it is not declared how the IdP should issue/distribute client credentials (secret vs. private-key JWT vs. mTLS, how they’re delivered, rotation, etc.).

Moreover, it is not declared how important it is for the IdP to ensure that the audience (The Issuer URL of the MCP server’s authorization server) is linked to the resource (The RFC9728 Resource Identifier of the MCP server).

MCP-Specific Note: While such a practice is unspecified in the parent specifications, enterprise architectures are usually based on multiple IdPs managing access to a wide range of resources, often overlapping: e.g., both IdP A and IdP B can authorize access to app C. Within the presented Enterprise MCP scenario, multiple IdPs could be authorizing multiple MCP Authorization Servers (often overlapping), while each of them manages scopes for a range of MCP Servers.

In such context, clearly defining namespaces and required checks on IdPs and MCP Authorization Servers would help preventing implementation issues like:

- Scope Namespace Collision: If Server A and Server B both use common scope names like

files:read,admin:write, etc., the attacker could leverage a low-privilege ID-JAG from Server B to gain access to Server A ifaudis not checked to be the Authorization Server and theresourceas one of the MCP Servers managed by the specific MCP Authorization Server- Resource Identifier Injection: If the MCP Server Authorization Server doesn’t validate that the

resourceclaim in the ID-JAG matches its own registered resource identifier, it cannot distinguish between ID-JAGs intended for different servers. Once obtained an MCP session, the injected value could be lost and irrelevant, allowing cross-access.The IdP must ensure that JAGs for resources not managed by the caller client are not forged.

4. ID-JAG Replay Concern

Whenever a single ID-JAG can mint multiple MCP Server access tokens, and those access tokens can invoke high-impact tools, then the ID-JAG becomes an amplifier of damage. That is why the decision of enforcing single-use checks on the jti should belong within the specification.

Conclusion

Authentication and authorization, especially in the context of SSO and transitive trust across third parties, have historically been a breeding ground for subtle, high-impact vulnerabilities. MCP does not change this reality. If anything, by introducing additional layers of indirection, remote server pooling, and agent-driven workflows, it amplifies the existing complexity. In the near term, we should expect AuthN/Z in MCP deployments to remain a challenging and error-prone domain.

For this reason, both auditors and developers should apply the strictest possible validation at every step of any SSO flow involving MCP. Token issuance, audience binding, scope enforcement, session propagation, identity mapping, trust establishment, and revocation logic all deserve explicit scrutiny. The end-to-end sequence diagram presented in this article is intended as a practical starting point: a tool to reason about the full authorization chain, enumerate trust boundaries, and systematically derive a security test plan. Every transition in that flow should be treated as a potential injection point or trust confusion opportunity.

When it comes to enterprise-managed authorization models, approaches such as JAG raise significant concerns. They introduce complex cross-specification dependencies, expand the number of actors involved in trust decisions, and substantially widen the attack surface. More critically, the model’s reliance on full user impersonation by non-deterministic agents, capable of autonomously selecting and executing tasks without explicit per-action user consent, is misaligned with MCP’s security requirements. Delegation without tight contextual constraints is indistinguishable from privilege escalation when boundaries are not rigorously enforced.

Based on our experience, a more robust direction for enterprise MCP deployments would emphasize strong, explicit trust anchors and protocol minimization. Technologies such as certificate-based authorization and mTLS, adapted specifically to MCP’s interaction model, provide clearer security properties and reduce ambiguity in identity binding. These mechanisms should be complemented by:

- Explicit protections for high-risk or irreversible actions

- Uniform and centralized access invalidation mechanisms for incident response and disaster recovery

- Strict resource namespacing and deterministic scope mapping

- Clear separation between user delegation and agent execution contexts

In short, the goal should not be to replicate the full complexity of traditional enterprise SSO stacks inside MCP, but to reduce implicit trust, constrain delegation semantics, and make authorization decisions auditable and deterministic.

If the industry has learned anything from the past decade of OAuth, OIDC, SAML, and SCIM vulnerabilities, it is that complexity without strong invariants inevitably leads to security gaps. MCP deployments would do well to internalize that lesson early.